Math Competition Records

MCP — Math Competition Points

A Unified Ranking System for High School Math Competitors

- 1. Motivation

- 2. Competition Tiers

- 3. Point Distribution

- 4. Subject Tests and Sub-Events

- 5. Time Decay and Rolling Window

- 6. Special Rules

- 7. Aggregation and Final Score

- 8. Worked Example: HMMT February over 4 Years

- 9. Competition Configuration

- 10. Data Pipeline

- 11. MCP %

- 12. Limitations

- 13. Summary

- Document History

- Disclaimer

1. Motivation

There is no single, comprehensive ranking of competitive math students in the United States. Students compete across a fragmented landscape — HMMT, PUMaC, AMO, ARML, BMT, MathCounts, and many more — but no system aggregates these results into a coherent picture of a student’s competitive strength.

Professional tennis solved an analogous problem decades ago. The ATP (men’s) and WTA (women’s) ranking systems assign points based on tournament tier and finishing position, computed over a rolling window. This gives fans, coaches, and players a transparent, up-to-date measure of who is performing at the highest level.

MCP (Math Competition Points) adapts this model for competitive mathematics. By categorizing competitions into tiers, assigning points based on placement, and applying time decay, MCP produces a single number that reflects a student’s recent, sustained performance across the most prestigious math competitions in the country.

Why model after ATP / WTA?

- Proven and intuitive. The tiered tournament system is well-understood and has been successfully used in tennis for over 50 years.

- Rewards breadth and consistency. A student who performs well at multiple competitions is ranked higher than one with a single strong result — just as in tennis, a Grand Slam winner who skips other tournaments will be outranked by a player who consistently performs across all events.

- Handles heterogeneous events. Not all competitions are equally difficult or prestigious. The tier system naturally accounts for this without requiring score normalization across competitions with different formats and grading schemes.

- Time-relevance. The rolling window and decay function ensure that the ranking reflects current ability, not historical peak performance.

2. Competition Tiers

Competitions are classified into four tiers — 2000, 1000, 500, and 250 — based on difficulty, prestige, selectivity, and the caliber of the contestant pool. The tier number represents the maximum points awardable for a first-place finish.

Tier 2000 — Grand Slam

| Competition | Ranked Students | Notes |

|---|---|---|

| IMO | 6 | International Mathematical Olympiad — US team only. |

| EGMO | 4 | European Girls’ Mathematical Olympiad — US team only. Counted toward MCP-W only (see Section 6). |

| RMM | ~6 | Romanian Masters of Mathematics — US participants only. |

Why Grand Slam? These are the pinnacle international olympiads. Participation is invitation-only and extremely selective: students earn their spot through national competitions (USAMO, USAJMO). A gold medal at IMO, EGMO, or RMM represents the highest achievement in competitive mathematics and warrants the maximum MCP value (2000 points; Section 3 describes the medal-based scoring).

Tier 1000 — Premier Competitions

| Competition | Ranked Students | Notes |

|---|---|---|

| HMMT February | ~50 | Overall individual and subject (Algebra & NT, Combinatorics, Geometry) rankings. The most competitive invitational in the US. |

| PUMaC Division A | 30~45 | Overall individual and subject (Algebra, Combinatorics, Geometry, Number Theory) rankings. Elite division of Princeton’s competition. |

| ARML Individual | ~64 | Individual round ranking at the American Regions Math League. |

| USAMO | ~150 | USA Mathematical Olympiad. The pinnacle national olympiad. Awards only (no individual ranks). |

Why these are Tier 1000: These represent the most difficult and prestigious open competitions available to US high school students. HMMT February and PUMaC Division A draw the strongest fields in the country. USAMO is the national olympiad. ARML’s individual round, while part of a team-oriented event, ranks students individually against the entire national field.

Tier 500 — Major Competitions

| Competition | Ranked Students | Notes |

|---|---|---|

| HMMT November | ~50 | Overall individual and subject (General, Theme) rankings. |

| PUMaC Division B | ~36 | Overall individual and subject (Algebra, Combinatorics, Geometry, Number Theory) rankings. |

| USAJMO | ~150 | USA Junior Mathematical Olympiad. Awards only (no individual ranks). |

| BMT | ~10 | Berkeley Math Tournament — overall individual and subject (Algebra, Calculus, Discrete, Geometry) rankings. |

| SMT | ~10 | Stanford Math Tournament — General plus subject rounds (Algebra, Calculus, Discrete, Geometry). General does not earn MCP. Subject rounds earn MCP for all three award tiers: Top Scores, Distinguished HM (Top 10%), and Honorable Mention (Top 25%). |

| CMIMC | ~10 | Carnegie Mellon competition — overall individual and subject (Algebra & NT, Combinatorics & CS, Geometry) rankings. |

| BAMO-12 | ~25 | Bay Area Mathematical Olympiad, high school division. |

| MathCounts National | ~56 | National ranking. Special rules apply (see Section 6). |

| MPFG | ~75 | Math Prize for Girls. Counted toward MCP-W only (see Section 6). |

| MPFG-Olympiad | ~32 | MPFG Olympiad round. Counted toward MCP-W only (see Section 6). |

Why these are Tier 500: These competitions are highly respected but either draw a somewhat less elite field than Tier 1000, or serve a specific sub-population. HMMT November is a strong competition but is explicitly positioned as a step below HMMT February. PUMaC Division B is the standard division below Division A. USAJMO is the junior counterpart of USAMO, for students who qualify below the USAMO level. BMT is a major West Coast tournament with a strong field. CMIMC and BAMO-12 are strong regionals with competitive fields.

Tier 250 — Competitive Regionals

| Competition | Ranked Students | Notes |

|---|---|---|

| MMATHS | ~99 | Yale’s math competition. |

| DMM | ~50 | Duke Math Meet. |

| CMM | ~10 | Caltech Math Meet. |

| BAMO-8 | ~30 | Bay Area Mathematical Olympiad, middle school division. |

| BrUMO Division A | ~25 | Brown University Math Olympiad Division A. Rankings from top 1 through DHM (Top 10%). |

| JHMT | ~25–40 | Johns Hopkins Math Tournament — high school individual (published winners and honorable mentions). Student-run; Baltimore-area field with national attendees. |

| EMCC | 10–20 | Exeter Math Club Competition — middle school individual rankings. |

Why these are Tier 250: These are well-run competitions with good problems but draw smaller or more geographically concentrated fields. They provide valuable competitive experience and meaningful results, but a strong finish here carries less weight than the same finish at a national-level event.

3. Point Distribution

Unlike tennis tournaments, where players are eliminated in discrete rounds (R32, R16, QF, SF, F), math competitions produce exact integer rankings. A student who finishes 5th performed measurably better than the student who finishes 6th, and their points should reflect that. Grouping ranks into round-style bands (as tennis does) would throw away this precision.

Step 1: Normalize to mcp_rank

Before computing points, all results are normalized to a single numeric mcp_rank using the average-rank-for-ties method. This unifies rank-based and award-based competitions into a single system.

For rank-based competitions (HMMT, PUMaC, ARML, etc.): If multiple students share the same rank, their mcp_rank is the average of the positions they span. For example, if 3 students are tied at rank 5, they occupy positions 5, 6, 7, so mcp_rank = (5+6+7)/3 = 6.
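The tie rule above can be sketched in a few lines of Python. This is a minimal illustration, not the project's actual code: the function name `average_tie_ranks` is hypothetical, and it assumes the published ranks use standard competition numbering (after three students tied at 5, the next rank is 8).

```python
from collections import Counter

def average_tie_ranks(raw_ranks):
    """Map each published rank to the mean of the positions its tie group spans.

    Assumes standard competition numbering: `size` students tied at rank r
    occupy positions r .. r + size - 1, whose mean is r + (size - 1) / 2.
    """
    counts = Counter(raw_ranks)
    return [r + (counts[r] - 1) / 2 for r in raw_ranks]

# Three students tied at rank 5 occupy positions 5, 6, 7.
print(average_tie_ranks([1, 2, 3, 4, 5, 5, 5, 8]))
# [1.0, 2.0, 3.0, 4.0, 6.0, 6.0, 6.0, 8.0]
```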

For award-based competitions (USAMO, USAJMO, MPFG-Olympiad): Awards are mapped to positional blocks. For example, if USAMO 2025 has 20 Gold, 12 Silver, 55 Bronze, and 68 HM:

- Gold: positions 1–20 → mcp_rank = 10.5

- Silver: positions 21–32 → mcp_rank = 26.5

- Bronze: positions 33–87 → mcp_rank = 60

- HM: positions 88–155 → mcp_rank = 121.5
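The award-to-block mapping can be reproduced with a short sketch. The function name `award_block_ranks` and the input shape (an ordered list of award/count pairs) are assumptions made for illustration; the counts are the hypothetical ones from the example above.

```python
def award_block_ranks(award_counts):
    """Give each award the average position of the block it occupies.

    award_counts: list of (award, count) pairs, best award first.
    """
    ranks = {}
    start = 1
    for award, count in award_counts:
        end = start + count - 1            # last position in this block
        ranks[award] = (start + end) / 2   # average-rank-for-ties over the block
        start = end + 1                    # next block begins right after
    return ranks

example_counts = [("Gold", 20), ("Silver", 12), ("Bronze", 55), ("HM", 68)]
print(award_block_ranks(example_counts))
# {'Gold': 10.5, 'Silver': 26.5, 'Bronze': 60.0, 'HM': 121.5}
```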

For mixed-format competitions (BAMO-12, BAMO-8, BrUMO Division A): Numeric ranks come first, followed by a non-numeric group. BAMO uses Honorable Mention; BrUMO uses DHM (Top 10%).

For MathCounts National: Numeric ranks (1, 2) → Semi-finalists (S) → Quarter-finalists (Q) → Countdown 9–12 (C) → remaining numeric ranks (13+). Each code group is treated as a tied block.

Step 2: Compute mcp_points

Grand Slam competitions (IMO, EGMO, RMM): Because these competitions are so selective, points are awarded by medal rather than by rank interpolation. No power-law curve is used.

- Gold: full tier value (2000)

- Silver: tier value × 75% (1500)

- Bronze: tier value × 50% (1000)

The standard time-decay rule (Section 5) still applies.
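As a small sketch of the medal rule, the lookup below maps a medal to its share of the tier value. The names `MEDAL_FACTOR` and `grand_slam_points` are hypothetical, and returning 0 for any other result is an assumption: the text above only specifies Gold, Silver, and Bronze.

```python
# Share of the tier value earned by each medal (from the list above).
MEDAL_FACTOR = {"Gold": 1.0, "Silver": 0.75, "Bronze": 0.5}

def grand_slam_points(medal, tier=2000):
    """Raw points (before time decay) for a Grand Slam result.

    Assumption: results other than Gold/Silver/Bronze earn 0 points.
    """
    return tier * MEDAL_FACTOR.get(medal, 0.0)

for medal in ("Gold", "Silver", "Bronze"):
    print(medal, grand_slam_points(medal))
# Gold 2000.0
# Silver 1500.0
# Bronze 1000.0
```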

All other competitions: Every mcp_rank is converted to points via a power-law curve between a maximum and a floor:

| Variable | Description |

|---|---|

r |

The current rank being calculated |

max_pts |

The maximum points awarded (at Rank 1) = Tier × weight |

min_pts |

Calculated (see below) — the floor at Rank N |

N |

The total size of the competition (all participants) |

k |

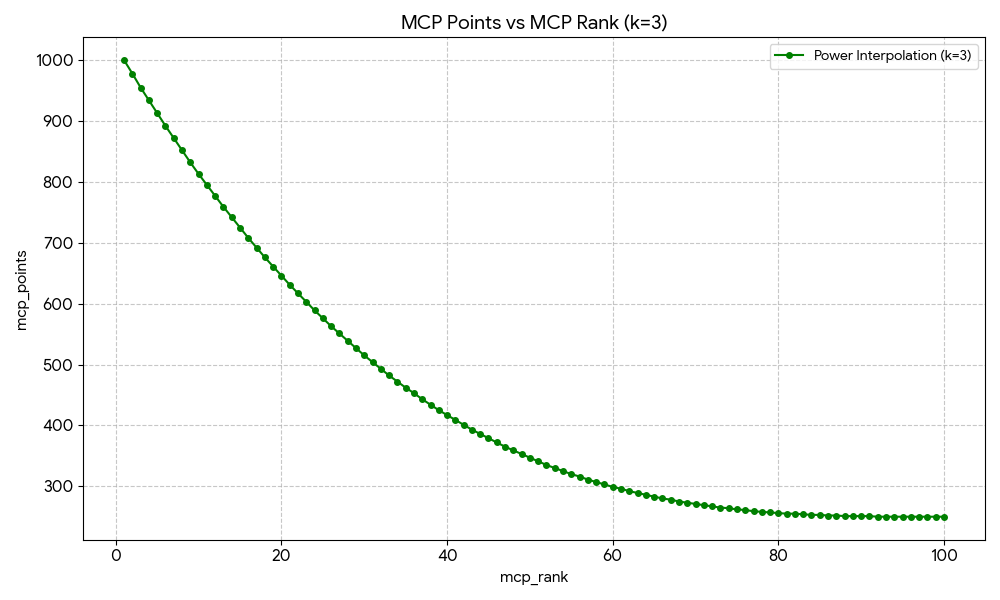

The steepness coefficient (3) |

Derived values:

- max_pts = Tier × weight (1000 for Tier 1000 overall, 500 for Tier 1000 subject tests at 50%).

- min_pts is decided by whether the competition has a selection process:

  - No selection process (open competition): min_pts = 10. Examples: ARML, BAMO, DMM, CMIMC, MMATHS, CMM, BMT, BrUMO, JHMT.

  - Selection process (selective competition): min_pts = 100 or 200. Examples: HMMT February, MPFG, MPFG-Olympiad, MathCounts National use 100; USAMO, USAJMO use 200. See MCP_V2_PARAMS in scripts/build_search_data.py for the authoritative values per contest.

Only awardees receive points. Since we only know the awardees, we only calculate and assign points for the awardees. Students whose ranks are unknown (e.g., ranks 51–2000 when only top 50 are recognized) receive 0 points.

Full competition records. The same algorithm applies when full competition records become available. Whether we have partial data (top 50 of 2000) or complete data (all 2000 ranks), the formula, min_pts calculation, and point distribution remain identical. No migration or recalibration is needed.

Between the anchors, the steepness coefficient $k$ controls how quickly points drop off. With $k = 3$, the curve is convex: top ranks are separated by large point gaps, while lower ranks are compressed together near the floor.
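To see the shape of the curve numerically, the formula min_pts + (max_pts − min_pts) × ((N − r)/(N − 1))^k can be sketched as a small function. The name `mcp_points` matches the column described in this document, but the signature and the guard for a one-person field are assumptions made for illustration.

```python
def mcp_points(rank, n, max_pts, min_pts, k=3):
    """Power-law points for a (possibly fractional) mcp_rank.

    Assumption: a field of size 1 simply earns max_pts, which avoids
    dividing by zero; the specification does not cover that case.
    """
    if n <= 1:
        return float(max_pts)
    return min_pts + (max_pts - min_pts) * ((n - rank) / (n - 1)) ** k

# Sample the curve for a Tier 1000 open competition with 2,000 participants.
for r in (1, 2, 10, 50, 500, 1000, 2000):
    print(r, round(mcp_points(r, n=2000, max_pts=1000, min_pts=10), 1))
```

The two anchors come out exactly (1000 at rank 1, 10 at rank 2000), and the gap between ranks 1 and 2 is larger than the gap between ranks 1999 and 2000, which is the convexity described above.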

Try the formula interactively: Desmos calculator.

Why this formula?

- Reflects true competition size. Using the actual competition size (N) rather than the number of awardees means a student who placed 50th among 2,000 is scored appropriately, not as if they were 50th among 50.

- Fair across different publication practices. HMMT publishes top 50; BMT publishes top 50%; USAMO publishes only awards. Dynamic min_pts adjusts for each competition’s structure.

- Accounts for selection. Selective competitions (USAMO, HMMT, MPFG) have pre-qualified fields. A higher floor for these ensures even the last qualifier gets points that reflect an elite field.

- Fixed endpoints. Rank 1 = max_pts; the theoretical floor at Rank N = min_pts. The two anchor points are always respected.

- Handles ties naturally. Fractional mcp_rank values (from averaged ties) plug directly into the formula. Tied students earn identical points.

Worked Example (Power-Law Formula)

| Parameter | Value |

|---|---|

| Competition size (N) | 2000 |

| Number of awardees (A) | 50 (top 50 only) |

| Tier | 1000 |

| max_pts | 1000 |

| min_pts | 10 |

Anchor points:

- Rank 1: 1000 points

- Rank 2000: 10 points (theoretical floor; we have no data for this rank)

Rank 50 (last awarded rank): $\text{mcp}_\text{points}(50) = 10 + (1000 - 10) \times \left(\frac{2000 - 50}{2000 - 1}\right)^3 \approx 929$

Ranks 51–2000: No official recognition → 0 points.
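The worked example can be checked with a short sketch that scores only the published ranks and treats everyone else as 0. The helper names and the student labels are hypothetical; the parameters (N = 2000, max_pts = 1000, min_pts = 10, k = 3) are the ones from the table above.

```python
def mcp_points(rank, n, max_pts, min_pts, k=3):
    """Power-law points between max_pts (rank 1) and min_pts (rank n)."""
    return min_pts + (max_pts - min_pts) * ((n - rank) / (n - 1)) ** k

def score_awardees(published, n, max_pts, min_pts):
    """Score only students whose rank was published (the awardees)."""
    return {name: mcp_points(rank, n, max_pts, min_pts)
            for name, rank in published.items()}

# Hypothetical labels for the first and last awarded ranks.
published = {"student_a": 1, "student_b": 50}
scores = score_awardees(published, n=2000, max_pts=1000, min_pts=10)

print(round(scores["student_a"]))       # 1000
print(round(scores["student_b"]))       # 929
# A participant outside the published list has no record, hence 0 points.
print(scores.get("student_c", 0.0))     # 0.0
```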

Estimated Competition Sizes

The algorithm requires the total competition size (N) and min_pts for each event. The canonical source is MCP_V2_PARAMS in scripts/build_search_data.py. Below are the values used in the implementation (N and min_pts may vary by year for some contests, e.g. BMT 2023 uses N=270).

| Competition | Tier | Est. Size (N) | Awardees | Selection | min_pts |

|---|---|---|---|---|---|

| HMMT February | 1000 | ~800 | ~50 | Selective (invitation/registration) | 100 |

| HMMT November | 500 | ~720 | ~50 | Open | 10 |

| PUMaC Division A | 1000 | ~180 | ~40–45 | Open | 10 |

| PUMaC Division B | 500 | ~180 | ~32–48 | Open | 10 |

| BMT Individual | 500 | ~630 | ~135–315 | Open | 10 |

| ARML Individual | 1000 | ~1,600 | ~45–65 | Open | 10 |

| USAMO | 1000 | ~280 | ~135–155 | Selective (AMC/AIME qualifiers) | 200 |

| USAJMO | 500 | ~220 | ~143–166 | Selective (AMC/AIME qualifiers) | 200 |

| CMIMC | 500 | ~200 | ~10 | Open | 10 |

| BAMO-12 | 500 | ~240 | ~25–36 | Open | 10 |

| BAMO-8 | 250 | ~420 | ~30–35 | Open | 10 |

| MathCounts National | 500 | 224 | 56 | Selective (state qualifiers) | 100 |

| MPFG | 500 | ~275 | ~60–75 | Selective (AMC qualifiers) | 100 |

| MPFG-Olympiad | 500 | ~75 | ~20–32 | Selective (MPFG invitees) | 100 |

| MMATHS | 250 | ~750 | ~99–105 | Open | 10 |

| DMM | 250 | ~270 | ~51 | Open | 10 |

| CMM | 250 | ~60 | ~10 | Open | 10 |

| BrUMO Division A | 250 | ~300 | ~22–24 | Open | 10 |

| JHMT | 250 | ~150 | ~30–40 (published) | Open | 10 |

| EMCC | 250 | ~150 | 10–20 (published) | Open | 10 |

Note: Open = min_pts 10; Selective = min_pts 100 or 200. See MCP_V2_PARAMS in scripts/build_search_data.py for the authoritative N and min_pts per contest and year.

4. Subject Tests and Sub-Events

Many competitions include both an overall individual ranking and separate subject-level rounds (e.g., HMMT February has Algebra & Number Theory, Combinatorics, and Geometry; PUMaC has Algebra, Geometry, Combinatorics, Number Theory; BMT has Algebra, Calculus, Discrete, Geometry; CMIMC has Algebra & NT, Combinatorics & CS, Geometry).

Both overall and subject results count, at different weights:

- The overall individual ranking counts at 100% of the competition’s tier value.

- Each subject test counts at 50% of the competition’s tier value.

Subject tests use the same unified formula from Section 3 with weight = 0.5:

- max_pts = Tier × 0.5 (e.g. 500 for a Tier 1000 subject test)

- min_pts follows the same dynamic rule as overall (10 for open, higher for selective competitions)

The mcp_rank column is pre-computed and stored in each subject test CSV, just like overall results. mcp_points is computed dynamically at build time.
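A quick sketch of how the weights set the ceiling for each event. The helper `max_points` is a hypothetical name; the tier and weights are the HMMT February values from this document.

```python
def max_points(tier, weight=1.0):
    """Ceiling for one event: the tier value scaled by the event weight."""
    return tier * weight

# HMMT February is Tier 1000 with three subject tests at 50% each.
overall_cap = max_points(1000)          # overall individual ranking
subject_cap = max_points(1000, 0.5)     # each subject test
best_possible = overall_cap + 3 * subject_cap

print(overall_cap, subject_cap, best_possible)  # 1000.0 500.0 2500.0
```

Under these weights, a student who wins the overall ranking and all three subject tests in one year would earn at most 2500 raw points from HMMT February before time decay.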

Competitions with Subject Tests

| Competition | Overall (Ranked) | Subject Tests (Ranked per subject) |

|---|---|---|

| HMMT February | hmmt-feb ~50 (100%) | Algebra & NT ~50, Combinatorics ~56, Geometry ~50 (50% each) |

| HMMT November | hmmt-nov ~50 (100%) | General ~82, Theme ~50 (50% each) |

| PUMaC Division A | pumac-a ~44 (100%) | pumac-a-algebra ~30, pumac-a-combinator ~31, pumac-a-geometry ~32, pumac-a-number-theory ~32 (50% each) |

| PUMaC Division B | pumac-b ~36 (100%) | Algebra ~34, Combinatorics ~31, Geometry ~51, Number Theory ~30 (50% each) |

| BMT | bmt ~10 (100%) | Algebra ~11, Calculus ~12, Discrete ~10, Geometry ~12 (50% each) |

| SMT | General (no MCP) | Algebra, Calculus, Discrete, Geometry (50% each; Top + DHM + HM all earn MCP) |

| CMIMC | cmimc ~10 (100%) | Algebra & NT ~10, Combinatorics & CS ~10, Geometry ~10 (50% each) |

5. Time Decay and Rolling Window

Rolling Window

MCP considers results from the most recent 4 competition years (the current year and the 3 before it). Older results are dropped entirely.

Decay Schedule

More recent results carry greater weight. The decay follows a geometric halving:

| Recency | Weight |

|---|---|

| Current year (most recent) | 100% |

| 1 year prior | 50% |

| 2 years prior | 25% |

| 3 years prior | 12.5% |

Formula: For a result earned y years ago (where y = 0 is the current year):

\[\text{weight}(y) = \left(\frac{1}{2}\right)^y, \quad y \in \lbrace 0, 1, 2, 3 \rbrace\]
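The decay schedule and the 4-year window can be combined in one small function. This is a sketch under the rules stated in this section; the name `decay_weight` and the choice to raise an error for future-dated results are assumptions.

```python
def decay_weight(years_ago, window=4):
    """Geometric halving inside the rolling window, 0 outside it."""
    if years_ago < 0:
        # Assumption: a result dated after the current year is a data error.
        raise ValueError("years_ago must be non-negative")
    if years_ago >= window:
        return 0.0          # outside the rolling window: dropped entirely
    return 0.5 ** years_ago

print([decay_weight(y) for y in range(5)])
# [1.0, 0.5, 0.25, 0.125, 0.0]
```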

Why geometric decay?

- Smooth and predictable. Each year’s weight is exactly half the previous year’s, making the system easy to understand and compute.

- Reflects improvement trajectories. Competitive math students improve rapidly. A student who scored well as a sophomore but is now a senior should be judged primarily on recent performance.

- Avoids cliff effects. Unlike a hard cutoff (e.g., “only last 2 years count”), geometric decay ensures that older results fade gradually rather than vanishing overnight.

- Mirrors ATP/WTA philosophy. Tennis rankings use a rolling 52-week window with full weight, but the principle of recency-weighting is the same.

6. Special Rules

6a. MathCounts National — No Time Limit, Equal Weight

MathCounts National is classified as a Tier 500 competition with special treatment:

- No rolling window. All historical MathCounts National results are counted, regardless of age.

- Equal weight across all years. No time decay is applied. A 1st-place finish in 2019 earns the same points as a 1st-place finish in 2025.

Why?

MathCounts is a middle school competition (grades 6–8). Students compete in it during a narrow window of their mathematical development and cannot return to it later. Unlike high school competitions, where a student can compete at HMMT for 4 consecutive years, MathCounts results are inherently limited to a student’s middle school years.

Applying time decay would systematically disadvantage older students who performed well at MathCounts years ago — even though their MathCounts achievement remains a meaningful signal of mathematical talent. In tennis terms, MathCounts is like a junior Grand Slam: the result stands on its own merits regardless of when it occurred.

Additionally, strong MathCounts performers who transition into high school competitions benefit from having their earlier accomplishments reflected in their MCP. This creates a more complete picture of a student’s mathematical trajectory.

6b. MPFG and MCP-W — Separate Women’s Ranking

The Math Prize for Girls (MPFG), MPFG-Olympiad, and EGMO are competitions open only to girls. Points from these competitions are not included in the overall MCP ranking. Instead, they contribute to a separate MCP-W (MCP for Women) ranking.

MCP-W is calculated as follows:

- Take the student’s full MCP score (from all open competitions).

- Add points earned from MPFG, MPFG-Olympiad, and EGMO (subject to the standard 4-year rolling window and decay).

- The sum is the student’s MCP-W score.
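The three steps above amount to a single addition. In the sketch below, the function name `mcp_w` mirrors the output field described later in this document, while the input shape and the numbers are hypothetical.

```python
def mcp_w(mcp, women_only_contribs):
    """MCP-W = full MCP plus decay-weighted MPFG / MPFG-Olympiad / EGMO points.

    women_only_contribs: already decay-weighted contributions (may be empty).
    """
    return mcp + sum(women_only_contribs)

# Hypothetical student: 1200 MCP from open competitions, plus an MPFG result
# worth 300 this year and an MPFG-Olympiad result worth 75 after decay.
print(mcp_w(1200.0, [300.0, 75.0]))   # 1575.0
# With no women-only results, MCP-W equals MCP.
print(mcp_w(1200.0, []))              # 1200.0
```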

Why a separate ranking?

- Fairness. MPFG, MPFG-Olympiad, and EGMO are restricted to female participants. Including these points in the overall MCP would create opportunities unavailable to male students, distorting the ranking. In tennis, the ATP and WTA maintain separate rankings for the same reason.

- Visibility. A dedicated MCP-W ranking highlights the achievements of female math competitors. Women are significantly underrepresented in competitive mathematics, and a visible ranking system can help recognize and encourage participation.

- No penalty. Female students are not penalized — they receive full MCP from all open competitions. MCP-W is purely additive: it can only increase (or equal) their overall MCP.

- Analogous to WTA. Just as the WTA rankings exist alongside the ATP rankings, MCP-W exists alongside MCP, serving the same purpose of recognition and motivation.

7. Aggregation and Final Score

A student’s MCP is the sum of their effective (decay-weighted) points across all eligible competitions:

\[\text{MCP} = \sum_{c \in \text{competitions}} \sum_{y=0}^{3} \text{mcp}\_\text{points}(c, y) \times \left(\frac{1}{2}\right)^y\]

with the exception of MathCounts, which uses:

\[\text{MCP}\_{\text{MathCounts}} = \sum_{y \in \text{all years}} \text{mcp}\_\text{points}(\text{MathCounts}, y) \times 1.0\]

There is no cap on the number of competitions counted. A student who competes broadly and performs well everywhere will be rewarded, just as in tennis.
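The two sums can be sketched together as one aggregation with a per-contest weight. Everything named here (`Result`, `NO_DECAY`, `effective_weight`, `total_mcp`) is hypothetical, the point values are invented for illustration, and a single `current_year` is used for simplicity even though Section 10 describes a current year per contest.

```python
from dataclasses import dataclass

@dataclass
class Result:
    contest: str
    year: int
    points: float   # raw mcp_points, before time decay

# Contests exempt from the rolling window and decay (Section 6a).
NO_DECAY = {"MathCounts National"}

def effective_weight(result, current_year, window=4):
    if result.contest in NO_DECAY:
        return 1.0                      # equal weight for all years
    years_ago = current_year - result.year
    if 0 <= years_ago < window:
        return 0.5 ** years_ago         # geometric halving
    return 0.0                          # outside the rolling window

def total_mcp(results, current_year):
    return sum(r.points * effective_weight(r, current_year) for r in results)

results = [
    Result("HMMT February", 2026, 800.0),        # 100%
    Result("HMMT February", 2024, 600.0),        # 25%
    Result("MathCounts National", 2019, 400.0),  # no decay
    Result("ARML Individual", 2021, 900.0),      # outside the window
]
print(total_mcp(results, current_year=2026))     # 1350.0
```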

Only mcp_rank is stored in the competition CSV files. mcp_points and mcp_contrib are computed dynamically at build time using each competition’s tier, weight, and the power-law formula from Section 3. The final output includes:

- Per-record mcp_points: the raw points earned for that result (before time decay).

- Per-record mcp_contrib: the time-weighted points this result contributes to the student’s total MCP. Computed as mcp_points × decay_weight. Only present when positive.

- Per-student mcp: the sum of all mcp_contrib values from open competitions.

- Per-student mcp_w (all female students): mcp + decay-weighted MPFG/MPFG-Olympiad/EGMO points. Present for every female student, even those without MPFG or EGMO results (in which case mcp_w equals mcp).

MCP-W (for female students)

\[\text{MCP-W} = \text{MCP} + \sum_{c \in \lbrace\text{MPFG, MPFG-Olympiad, EGMO}\rbrace} \sum_{y=0}^{3} \text{mcp}\_\text{points}(c, y) \times \left(\frac{1}{2}\right)^y\]

8. Worked Example: HMMT February over 4 Years

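Consider a student who competed at HMMT February (Tier 1000) from 2023 to 2026, steadily improving. The figures below take N = 50 (the ~50 ranked students) with a floor of 50% of max_pts, which are the v1 parameters listed in the Document History: the overall individual ranking runs from 1000 down to 500, and each of the three subject tests (Algebra & NT, Combinatorics, Geometry) counts at 50% weight (max 500, min 250). Under the v2 parameters in Section 3 (N ≈ 800, min_pts = 100) the individual values would differ, but the aggregation works the same way.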

Points per event in the current year (2026):

| Event | Weight | Rank | mcp_points |

|---|---|---|---|

| Overall | 100% | 3 | 941 |

| Algebra & NT | 50% | 5 | 444 |

| Combinatorics | 50% | 10 | 386 |

| Geometry | 50% | 2 | 485 |

All 4 years with time decay:

| Year | Overall | Alg & NT | Combo | Geometry | Raw Total | Decay | Effective |

|---|---|---|---|---|---|---|---|

| 2026 | 941 (rank 3) | 444 (rank 5) | 386 (rank 10) | 485 (rank 2) | 2256 | 100% | 2256.00 |

| 2025 | 815 (rank 8) | 386 (rank 10) | 341 (rank 15) | 444 (rank 5) | 1986 | 50% | 993.00 |

| 2024 | 682 (rank 15) | 341 (rank 15) | 307 (rank 20) | 386 (rank 10) | 1716 | 25% | 429.00 |

| 2023 | 566 (rank 25) | 307 (rank 20) | 267 (rank 30) | 341 (rank 15) | 1481 | 12.5% | 185.13 |

| Total | | | | | | | 3863.13 |

This student’s MCP contribution from HMMT February alone is 3863.13. Their full MCP score would also include decay-weighted points from any other competitions they entered (ARML, PUMaC, AMO, etc.) within the 4-year window.
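The table can be reproduced with a short script. The parameters are an assumption inferred from the figures: N = 50 with a floor of half of max_pts (500 for overall, 250 for subjects) and k = 3, with each event's points rounded to an integer before summing. The names `points`, `RANKS`, and `year_total` are hypothetical.

```python
def points(rank, n, max_pts, min_pts, k=3):
    """Power-law points between max_pts (rank 1) and min_pts (rank n)."""
    return min_pts + (max_pts - min_pts) * ((n - rank) / (n - 1)) ** k

# year -> ranks for (Overall, Alg & NT, Combo, Geometry), from the table above
RANKS = {
    2026: (3, 5, 10, 2),
    2025: (8, 10, 15, 5),
    2024: (15, 15, 20, 10),
    2023: (25, 20, 30, 15),
}

def year_total(ranks, n=50):
    overall, *subjects = ranks
    total = round(points(overall, n, 1000, 500))                    # 100% weight
    total += sum(round(points(r, n, 500, 250)) for r in subjects)   # 50% weight
    return total

raw = {year: year_total(r) for year, r in RANKS.items()}
effective = {year: raw[year] * 0.5 ** (2026 - year) for year in raw}

print(raw)                      # {2026: 2256, 2025: 1986, 2024: 1716, 2023: 1481}
print(sum(effective.values()))  # 3863.125
```

The unrounded total is 3863.125, which the table reports as 3863.13.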

9. Competition Configuration

All MCP parameters are centralized in a single configuration file. Each competition defines its tier, weight, ranking mode (how raw data is converted to mcp_rank), and which CSV column holds the raw ranking data.

Result files are discovered dynamically — all result CSVs within each competition-year directory are processed.

| Competition | Tier | Weight | Mode |

|---|---|---|---|

| IMO | 2000 | 100% | rank |

| EGMO (→ MCP-W) | 2000 | 100% | rank |

| RMM | 2000 | 100% | rank |

| HMMT February (overall) | 1000 | 100% | rank |

| HMMT Feb — Algebra & NT | 1000 | 50% | rank |

| HMMT Feb — Combinatorics | 1000 | 50% | rank |

| HMMT Feb — Geometry | 1000 | 50% | rank |

| PUMaC Division A (overall) | 1000 | 100% | rank |

| PUMaC A — Algebra | 1000 | 50% | rank |

| PUMaC A — Combinatorics | 1000 | 50% | rank |

| PUMaC A — Geometry | 1000 | 50% | rank |

| PUMaC A — Number Theory | 1000 | 50% | rank |

| BMT Individual (overall) | 500 | 100% | bmt |

| BMT — Algebra | 500 | 50% | bmt |

| BMT — Calculus | 500 | 50% | bmt |

| BMT — Discrete | 500 | 50% | bmt |

| BMT — Geometry | 500 | 50% | bmt |

| SMT — Algebra | 500 | 50% | smt |

| SMT — Calculus | 500 | 50% | smt |

| SMT — Discrete | 500 | 50% | smt |

| SMT — Geometry | 500 | 50% | smt |

| ARML Individual | 1000 | 100% | rank |

| USAMO | 1000 | 100% | award |

| HMMT November (overall) | 500 | 100% | rank |

| HMMT Nov — General | 500 | 50% | rank |

| HMMT Nov — Theme | 500 | 50% | rank |

| PUMaC Division B (overall) | 500 | 100% | rank |

| PUMaC B — Algebra | 500 | 50% | rank |

| PUMaC B — Combinatorics | 500 | 50% | rank |

| PUMaC B — Geometry | 500 | 50% | rank |

| PUMaC B — Number Theory | 500 | 50% | rank |

| USAJMO | 500 | 100% | award |

| CMIMC (overall) | 500 | 100% | rank |

| CMIMC — Algebra & NT | 500 | 50% | rank |

| CMIMC — Combinatorics & CS | 500 | 50% | rank |

| CMIMC — Geometry | 500 | 50% | rank |

| BAMO-12 | 500 | 100% | rank_mixed |

| MathCounts National | 500 | 100% | mathcounts |

| MPFG (→ MCP-W) | 500 | 100% | rank |

| MPFG-Olympiad (→ MCP-W) | 500 | 100% | award |

| MMATHS | 250 | 100% | rank |

| DMM | 250 | 100% | rank |

| CMM | 250 | 100% | rank |

| BAMO-8 | 250 | 100% | rank_mixed |

| BrUMO Division A | 250 | 100% | rank_mixed |

| JHMT | 250 | 100% | rank |

Contests in the database without MCP points: HMIC has no mcp_tier in contests.csv (no MCP). Math Kangaroo National (mk-national) has no tier and is record-only. MATHCOUNTS National team rosters (mathcounts-national) do not earn MCP; national ranking uses mathcounts-national-rank. See scripts/build_search_data.py and contests.csv.

10. Data Pipeline

MCP computation is a two-stage pipeline:

Stage 1: Compute mcp_rank

All result CSVs are processed using the competition configuration. For each file, the raw ranking column is read and the appropriate ranking mode is applied:

- rank: numeric ranks with average-rank-for-ties.

- award: award strings (Gold, Silver, Bronze, HM) mapped to positional blocks.

- rank_mixed: numeric ranks followed by a non-numeric group (Honorable Mention for BAMO, DHM (Top 10%) for BrUMO).

- bmt: BMT-specific ranking (top scores, DHM, HM).

- mathcounts: numeric ranks + special codes (S, Q, C) treated as tied blocks.

The computed mcp_rank column is written back into the CSV.

Stage 2: Compute mcp_points and aggregate

At build time, the system:

- Loads each competition’s tier and weight from the configuration.

- For each result file, takes N (the competition size) and min_pts from MCP_V2_PARAMS and computes mcp_points per record. Grand Slam competitions (IMO, EGMO, RMM) use award-based points (Gold/Silver/Bronze); all others use the power-law formula from Section 3.

- Determines the current year per contest from the data (the most recent year with results for that contest). Time decay is applied relative to each contest’s own current year, not a single global year. This ensures that a contest whose latest data is from 2025 treats 2025 as 100% weight, even if other contests have 2026 data.

- Aggregates per-student totals:

  - mcp: sum of decay-weighted points from all open competitions.

  - mcp_w: mcp + decay-weighted MPFG/MPFG-Olympiad/EGMO points. Present for all female students.
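The per-contest current-year rule can be sketched as follows. The function names and the record shape (contest, year) are hypothetical and do not describe the actual scripts/build_search_data.py; the slug "arml" is invented for the example, and the MathCounts exception from Section 6a is left out for brevity.

```python
def latest_year_by_contest(records):
    """Most recent year with results, per contest."""
    latest = {}
    for contest, year in records:
        if contest not in latest or year > latest[contest]:
            latest[contest] = year
    return latest

def decay_for(contest, year, latest, window=4):
    """Decay relative to the contest's own latest year, not a global year."""
    years_ago = latest[contest] - year
    return 0.5 ** years_ago if 0 <= years_ago < window else 0.0

records = [("hmmt-feb", 2026), ("hmmt-feb", 2025), ("arml", 2025), ("arml", 2024)]
latest = latest_year_by_contest(records)

print(latest)                               # {'hmmt-feb': 2026, 'arml': 2025}
print(decay_for("arml", 2025, latest))      # 1.0 (2025 is this contest's current year)
print(decay_for("hmmt-feb", 2025, latest))  # 0.5
```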

Adding a new competition

- Add the competition to the configuration with its tier, weight, ranking mode, and rank column.

- Place result CSVs in the appropriate competition-year directory.

- Run both stages in order.

11. MCP %

MCP % (MCP contribution percentage) measures what fraction of a student’s total MCP comes from a selected set of competitions. It answers the question: “How much of this student’s ranking is driven by contest X (or contests X, Y, Z)?”

What it means

When you select one or more contests in the database’s contest filter (e.g., AMO, HMMT February, or both), MCP % shows the ratio:

\[\text{MCP}\% = \frac{\text{MCP from selected contests}}{\text{Total MCP}}\]

For example, if a student has 2000 total MCP and 1200 of it comes from AMO and HMMT February, their MCP % for that selection is 60%. A student whose MCP is entirely from HMMT would show 100% when HMMT is selected and 0% when only AMO is selected.
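The ratio is simple to compute from per-contest contributions. In this sketch the function name `mcp_percent`, the dictionary input, and the per-contest split are hypothetical; returning `None` when a student has no MCP is an assumption, since the text does not cover that case.

```python
def mcp_percent(contribs, selected):
    """Share of total MCP (as a percentage) that comes from `selected` contests.

    contribs: {contest name: decay-weighted MCP from that contest}
    """
    total = sum(contribs.values())
    if total == 0:
        return None   # assumption: undefined for a student with no MCP
    chosen = sum(v for contest, v in contribs.items() if contest in selected)
    return 100 * chosen / total

# Hypothetical student with 2000 total MCP.
contribs = {"AMO": 700.0, "HMMT February": 500.0, "ARML": 800.0}
print(mcp_percent(contribs, {"AMO", "HMMT February"}))   # 60.0
print(mcp_percent(contribs, {"ARML"}))                   # 40.0
```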

How to use it

- Identify specialization. Students with high MCP % for a given contest are heavily “specialists” in that competition — their ranking is driven largely by that event. Students with low MCP % for the same contest have diversified their results across many competitions.

- Compare contest importance. Select different contest combinations to see how much each contributes to top students’ totals. This helps understand which competitions drive the overall ranking.

- Sort and explore. The database lets you sort by MCP % (ascending or descending) when a contest filter is active. Use this to find students whose MCP is most (or least) concentrated in the selected contests.

MCP % is only meaningful when the contest filter is active. Without a filter, there is no “selected” subset, so MCP % is not computed.

12. Limitations

Our data covers only students who received official recognition at each competition — i.e., those who placed in the ranked list or received an award (Gold, Silver, Bronze, Honorable Mention, etc.). We do not have complete results for all competitors at most events.

Because of this, we use competition size (N) and dynamic min_pts in the point distribution formula (Section 3). Students whose ranks are unknown (e.g., ranks 51–2000 when only top 50 are published) receive 0 points. The algorithm uses estimated competition sizes where full data is unavailable. When full results become available, the same formula applies without recalibration.

13. Summary

MCP adapts the ATP/WTA tennis ranking model to competitive mathematics: competitions are tiered by prestige, points are assigned by placement via a power-law curve, and results are aggregated over a rolling window with geometric time decay. The system unifies rank-based and award-based competitions through a normalized mcp_rank, rewards both overall and subject-level performance, and maintains a separate MCP-W ranking for women. The database supports MCP % to analyze contest-specific contribution when filters are applied. Data coverage is limited to officially recognized students; N is competition size and min_pts is dynamic (10 for open, higher for selective competitions).

| Design Decision | Choice | Rationale |

|---|---|---|

| Model | ATP/WTA-inspired | Proven tiered system; rewards breadth, handles heterogeneous events, time-relevant |

| Tier system | 2000 / 1000 / 500 / 250 | Grand Slam for international olympiads; matches competition prestige |

| Rank normalization | mcp_rank (avg-rank-for-ties, award blocks) | Unifies rank-based, award-based, and mixed-format competitions |

| Point formula | min + (max − min) × ((N−r)/(N−1))^k | Power-law curve with k=3; Rank 1 = max_pts; N = competition size |

| min_pts | Dynamic (10 open, higher selective) | Reflects true field size; fair across publication practices |

| IMO/EGMO/RMM | Medal-based (Gold/Silver/Bronze) | No power-law; full/75%/50% of tier; time decay applies |

| Subject tests | 50% of tier value | Overall 100%; each subject 50%; rewards specialization |

| Rolling window | 4 years | Captures a full high school career |

| Time decay | Geometric (÷2 per year) | Smooth, recency-biased, no cliff effects |

| MathCounts | No decay, no window | Middle school results are inherently time-limited |

| MPFG / EGMO | Separate MCP-W | Fairness (gender-restricted); visibility for women |

| MCP % | MCP from selected contests ÷ Total MCP | Identifies specialization; requires contest filter |

| Data coverage | Officially recognized only | Awardees receive points; others 0; N from estimated competition size |

Document History

| Version | Date | Description |

|---|---|---|

| v1 | Initial | Original MCP specification: tier-based points, power-law curve, N = number of awardees, min_pts = 50% of max_pts |

| v2 | 3/14/2026 | Point distribution upgrade: N = competition size (total participants); dynamic min_pts (10 for open, higher for selective); awardees only receive points |

| v2.1 | 5/2026 | Documented JHMT (Tier 250); noted HMIC / MK / MC roster entries without MCP |

Disclaimer

MCP is in beta and under community review. The tier assignments, point formulas, and competition inclusions are subject to change as we gather feedback. If you have suggestions for improving the MCP algorithm — including tier placements, new competitions, or formula adjustments — please reach out: mathcontestintegrity@gmail.com.