Math Competition Records

Using Data to Spot Potential Integrity Issues in Math Competitions

A short guide for coaches, administrators, and parents: how to use a public database of math competition results to check whether a student’s track record looks consistent—and when to look closer.

1. How bad is cheating in American Math Competitions?

Cheating in U.S. math competitions is a real problem, but the presence of cheaters should not overshadow the hard work of genuine, dedicated students. Not every contest is equally trustworthy. Known problems include:

- Leaked exams — Some tests have been sold or shared online before test day.

- Weak proctoring — School-proctored national exams can be inconsistent.

- Long testing windows — When the same test is given over many weeks (e.g., some state rounds), earlier test-takers can leak to later ones.

- Inflated cutoffs — Community reports and data suggest scores and cutoffs don’t always reflect fair play.

Some contests are much more reliable than others. The guide Integrity in US Math Competitions rates major contests on a 1–10 scale (1 = rampant cheating, 10 = very clean), using community discussions and documented incidents. For example, USAMO, MathCounts National, and HMMT score high, while AMC 10/12 and AIME have had serious, repeated integrity issues.

Bottom line: One amazing result from a contest with known problems doesn’t tell you much. Consistency across multiple high-integrity, in-person competitions is a much better sign of real ability. The database we describe below is built to help you see that consistency—or the lack of it.

2. How to use this database to spot red flags

The database pulls together public results from high-integrity, in-person contests (e.g., USAMO/USJMO, HMMT, PUMaC, CMIMC, ARML, MathCounts National). Here’s how to use it.

Look for a real track record.

Strong signal: a student shows up again and again in several high-integrity contests over multiple years (e.g., JMO then AMO, or steady HMMT/PUMaC results). Weaker signal: only one or two results, or only in contests with known integrity issues (see the integrity ratings).
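The strong-versus-weaker distinction above can be sketched as a small summary function. This is only an illustration: the `Result` record, the `track_record` and `signal` names, and the thresholds (two contests, two years) are assumptions made for the example, not rules used by the database. The contest set is the list of high-integrity contests named in this guide.

```python
from dataclasses import dataclass

# High-integrity, in-person contests as listed in this guide.
HIGH_INTEGRITY = {
    "USAMO", "USAJMO", "HMMT", "PUMaC", "CMIMC", "ARML", "MathCounts National",
}


@dataclass(frozen=True)
class Result:
    """One recorded result (hypothetical shape, for illustration only)."""
    student: str
    contest: str
    year: int
    award: str = ""


def track_record(results):
    """Count distinct high-integrity contests and years in a student's results."""
    anchored = [r for r in results if r.contest in HIGH_INTEGRITY]
    return {
        "contests": len({r.contest for r in anchored}),
        "years": len({r.year for r in anchored}),
        "results": len(anchored),
    }


def signal(results, min_contests=2, min_years=2):
    """Label a record 'strong' or 'weaker' using assumed, adjustable thresholds."""
    summary = track_record(results)
    if summary["contests"] >= min_contests and summary["years"] >= min_years:
        return "strong"
    return "weaker"
```

A "weaker" label here only means the recorded history is thin; as with the database itself, it is a prompt to look further, not a finding.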

Cross-check claims.

When someone claims a result in a contest that’s in the database, search the student’s name and confirm that the result (contest, year, award/rank) appears. If they claim a strong result in a high-integrity contest but have no record in the database, the cause may be a data gap or a name-spelling mismatch, or the claim may be worth checking against official sources.

Use “anchor” contests.

Contests that are in-person, proof-based, or tightly proctored (e.g., USAMO/USJMO, HMMT, PUMaC, CMIMC, ARML, MathCounts National) are the hardest to cheat on. Use the database to see whether a student has any such results. If they have big claims elsewhere but no high-integrity record here, that’s a reason to dig deeper and ask for official verification.

Two strong red-flag patterns

1. No in-person track record, but high AMC/AIME score or JMO/AMO qualification.

Qualifying for the USA(J)MO is extremely selective: only about 250 students (up to 12th grade) qualify for the USAMO each year, and only about 250 (up to 10th grade) qualify for the USAJMO, from hundreds of thousands who take AMC/AIME. But qualification is based on AMC 10/12 and AIME scores, and those exams have well-known integrity issues. A qualification with no in-person results behind it is therefore not, by itself, reliable evidence of ability.

2. Only easier contests, then suddenly AMO gold or silver.

USAMO gold and silver are among the hardest awards in U.S. pre-college math: only about 15–20 gold and roughly 30–35 silver medalists each year nationwide (fewer than half of USA(J)MO qualifiers win any award). The exam is proof-based, spread over two days, and graded by experts. Virtually every genuine gold or silver medalist has a long, visible history of strong performance in other hard contests (e.g., prior JMO/AMO appearances, top placements at HMMT, PUMaC, or ARML). A student who has only middling or easy-contest results in the database and then suddenly appears as an AMO gold or silver medalist, with no progression through JMO or other high-integrity contests, is therefore an outlier. That doesn’t by itself prove cheating, but it is a pattern that warrants closer review and, where possible, official verification.

In the current AI era, that review should also consider whether a student may have illicitly used AI tools during loosely proctored or online exams. The database can’t see how a result was obtained, but it can highlight suspicious jumps that deserve human follow-up and, when appropriate, confirmation from official records.

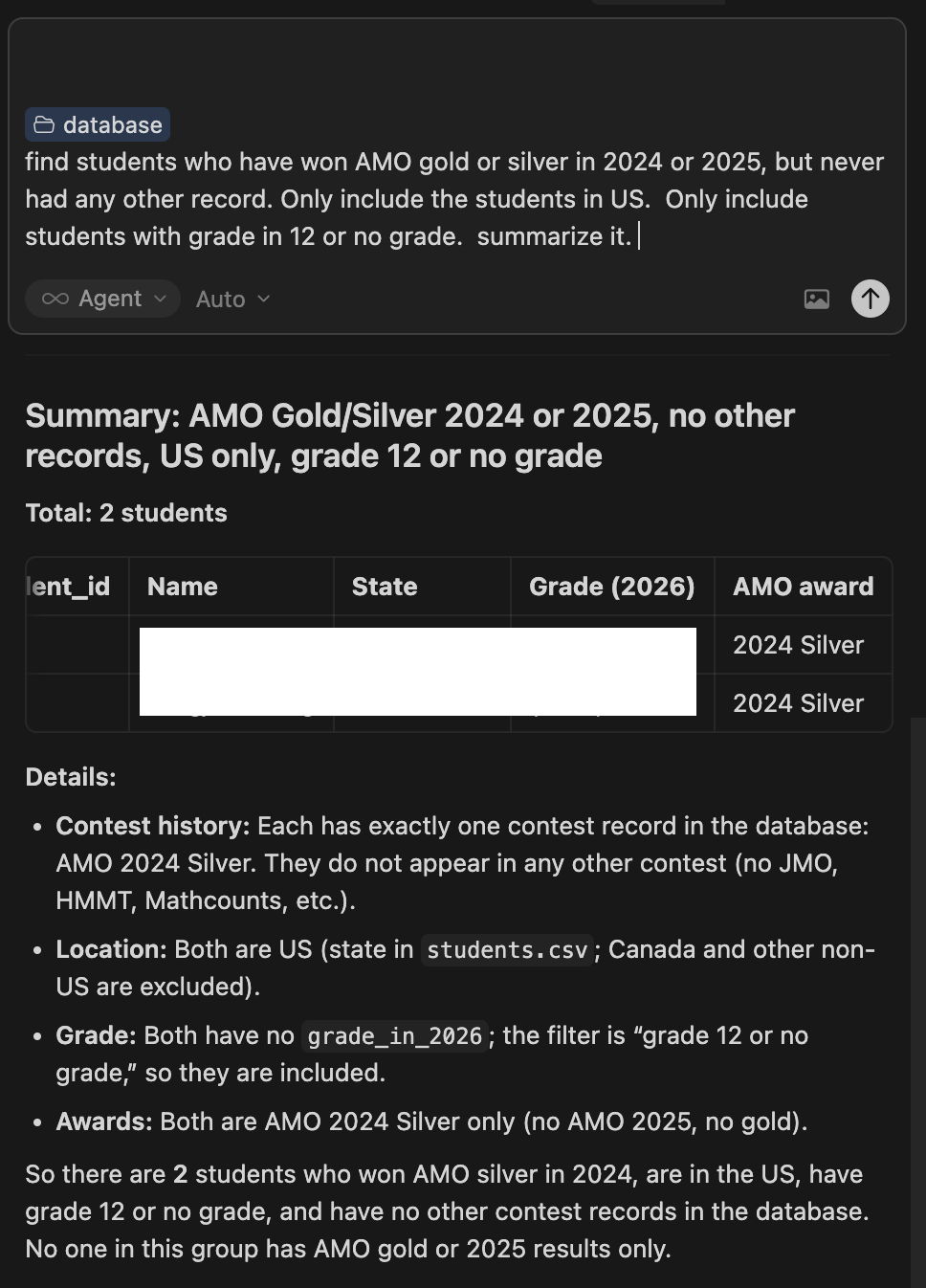

From this database, we find 22 AMO gold/silver medalists in 12th grade or with no recorded grade who have no other high‑integrity contest records—most of them silver medalists. It’s exactly the kind of outlier pattern that should prompt coaches and selectors to look more closely.

The accompanying image shows data from 2024 and 2025 only.
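The outlier pattern described in this section (an AMO gold or silver medalist, in 12th grade or with no recorded grade, who has no other high-integrity record) can be written as a simple filter. The sketch below is illustrative only: the record fields (`student`, `contest`, `year`, `award`, `grade`), the award labels, and the name `flag_outliers` are assumptions for the example, not the database's actual schema or query.

```python
from collections import defaultdict

# High-integrity, in-person contests as listed in this guide.
HIGH_INTEGRITY = {
    "USAMO", "USAJMO", "HMMT", "PUMaC", "CMIMC", "ARML", "MathCounts National",
}


def flag_outliers(records):
    """Return students whose only high-integrity records are USAMO gold/silver.

    `records` is an iterable of dicts with the assumed keys
    student, contest, year, award, grade (grade may be None).
    """
    by_student = defaultdict(list)
    for rec in records:
        by_student[rec["student"]].append(rec)

    flagged = []
    for student, recs in by_student.items():
        medals = [
            r for r in recs
            if r["contest"] == "USAMO" and r["award"] in {"Gold", "Silver"}
        ]
        if not medals:
            continue
        # Keep only medalists in 12th grade or with no recorded grade.
        if not any(r.get("grade") in (12, None) for r in medals):
            continue
        # Any other high-integrity result counts as a supporting track record.
        others = [
            r for r in recs
            if r["contest"] in HIGH_INTEGRITY and r not in medals
        ]
        if not others:
            flagged.append(student)
    return sorted(flagged)
```

A flagged name is a candidate for follow-up and official verification. A missing record may simply reflect a gap in the data.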

3. How this database is built

The project is a community effort. Results are taken from public, official sources on contest websites (including, where necessary, archived pages and PDF result lists). Volunteers add and maintain the data: students are matched across contests by name (and aliases where needed), and each contest’s results are stored by year. The searchable website is updated from this dataset so you can look up a student and see all their recorded results in one place.
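Matching students across contests by name and alias, as described above, might look roughly like the following. This is a minimal sketch under stated assumptions: the normalization rules, the `ALIASES` table, the function names, and the sample names are all hypothetical and are not the volunteers' actual procedure.

```python
import unicodedata


def normalize(name):
    """Lowercase, strip accents, and collapse whitespace in a name."""
    decomposed = unicodedata.normalize("NFKD", name)
    no_accents = "".join(c for c in decomposed if not unicodedata.combining(c))
    return " ".join(no_accents.lower().split())


# Hypothetical alias table: normalized variant -> normalized canonical name.
ALIASES = {"alex chen": "alexander chen"}


def canonical_key(name, aliases=ALIASES):
    """Map a raw name to the key under which its results are stored."""
    key = normalize(name)
    return aliases.get(key, key)


def merge_results(rows, aliases=ALIASES):
    """Group (name, contest, year) rows under one key per student."""
    merged = {}
    for name, contest, year in rows:
        merged.setdefault(canonical_key(name, aliases), []).append((contest, year))
    return merged
```

Name-only matching can merge two different students who share a name, or split one student whose name is spelled differently across sources, which is one reason an unexpected gap or overlap is worth checking against official results.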

Importantly, the database only includes students who received some form of official recognition (for example, qualifiers, medalists, or listed award winners). It does not include full lists of all participants or lower-scoring students who are not named in any public, official results, because those data are generally not available.

4. How to search

On the website:

Go to the search page, type a student’s name (full or partial), and hit search. You’ll see every contest result on file for that student, grouped by contest and year. You can filter by grade or “girls only” when browsing top students by number of results. There’s also an Export to PDF option so you can save a student’s record for offline review or sharing.

What to try:

- Verify one claim — Search the name and confirm the specific result (e.g., USAMO 2025 Gold, HMMT 2026) appears.

- Check consistency — If they claim a top result, see whether they also have other high-integrity contests in the same or nearby years.

- Try different spellings — Use last name only or alternate spellings; the database may have an alias listed.

- Browse top performers — The “top students by number of records” list shows who has the most results; those are often the clearest “consistent track record” cases.
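If you are working with an exported list of names rather than the website, partial-name and alternate-spelling lookups like those suggested above can be approximated locally. The sketch below is an assumption about one reasonable approach, not a description of how the site's search works; the `search` function, its `cutoff` parameter, and the sample names are hypothetical. It uses the standard-library `difflib.get_close_matches` for the spelling fallback.

```python
import difflib


def search(names, query, cutoff=0.8):
    """Find names matching a full or partial query.

    First try a case-insensitive substring match on every query word;
    if nothing matches, fall back to close spellings of the whole query.
    """
    tokens = query.lower().split()
    hits = [n for n in names if all(t in n.lower() for t in tokens)]
    if hits:
        return hits
    lowered = {n.lower(): n for n in names}
    close = difflib.get_close_matches(
        query.lower(), list(lowered), n=5, cutoff=cutoff
    )
    return [lowered[c] for c in close]
```

For example, with the made-up list `["Alexander Chen", "Maria Gonzalez", "Mary Gonzales"]`, the query `"chen"` matches by substring, while `"Maria Gonzales"` has no exact match and falls through to the close-spelling step.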

5. Using AI to get more insight

You can also use AI tools to analyze the data. For example: paste a student’s results (from the site or an exported PDF) and ask the AI to summarize their trajectory—how many high-integrity contests, over how many years, and whether the progression looks consistent. You can ask it to flag students who have one very high result but almost no other high-integrity history, or to compare a student’s results to the integrity ratings. The database stays the source of truth; AI is only for summarizing, filtering, or suggesting who might be worth a closer look. Final decisions should always use official sources and human judgment.

6. Disclaimer and Limitations

What the data can’t tell you:

- It does not prove that anyone cheated. It only shows consistency (or lack of it) across high-integrity contests.

- It does not replace official verification. For high-stakes decisions (admissions, team selection, awards), always use official results when possible.

- It does not include every competition or every year. Missing data doesn’t mean a result is invalid.

Use it responsibly.

Use the database to support careful review and to decide whom to verify—not to accuse. Consistency is a positive signal; thin or inconsistent high-integrity history is a reason to look further, not a conclusion.

If you spot errors, have official result updates, or want to report suspected cheating, contact mathcontestintegrity@gmail.com.

For more on how to use the database, see How to Use This Database. For contest-by-contest integrity ratings, see Integrity in US Math Competitions.